Professional-Cloud-Security-Engineer Google Cloud Certified - Professional Cloud Security Engineer Questions and Answers

Your company’s new CEO recently sold two of the company’s divisions. Your Director asks you to help migrate the Google Cloud projects associated with those divisions to a new organization node. Which preparation steps are necessary before this migration occurs? (Choose two.)

You manage one of your organization's Google Cloud projects (Project A). A VPC Service Controls (VPC SC) perimeter is blocking API access requests to this project, including Pub/Sub. A resource running under a service account in another project (Project B) needs to collect messages from a Pub/Sub topic in your project. Project B is not included in a VPC SC perimeter. You need to provide access from Project B to the Pub/Sub topic in Project A using the principle of least privilege.

What should you do?

Your organization's record data exists in Cloud Storage. You must retain all record data for at least seven years. This policy must be permanent.

What should you do?

You have stored company approved compute images in a single Google Cloud project that is used as an image repository. This project is protected with VPC Service Controls and exists in the perimeter along with other projects in your organization. This lets other projects deploy images from the image repository project. A team requires deploying a third-party disk image that is stored in an external Google Cloud organization. You need to grant read access to the disk image so that it can be deployed into the perimeter.

What should you do?

Your organization is using Vertex AI Workbench Instances. You must ensure that newly deployed instances are automatically kept up-to-date and that users cannot accidentally alter settings in the operating system. What should you do?

You want to set up a secure, internal network within Google Cloud for database servers. The servers must not have any direct communication with the public internet. What should you do?

Your company conducts clinical trials and needs to analyze the results of a recent study that are stored in BigQuery. The interval when the medicine was taken contains start and stop dates. The interval data is critical to the analysis, but specific dates may identify a particular batch and introduce bias. You need to obfuscate the start and end dates for each row and preserve the interval data.

What should you do?
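The requirement in this scenario (hide the real dates but keep the interval) can be illustrated with a small date-shifting sketch: both dates in a row are moved by the same keyed offset, so the difference between them is unchanged. This is plain Python for illustration only, not a Google Cloud API; the function name `shift_dates`, the row key, the secret, and the 100-day bound are all assumptions made for the example.

```python
import hashlib
import hmac
from datetime import date, timedelta


def shift_dates(row_key: str, start: date, stop: date,
                secret: bytes, max_days: int = 100) -> tuple[date, date]:
    """Shift both dates by the same per-row offset in [-max_days, max_days]."""
    # Derive a deterministic offset from the row key and a secret (hypothetical scheme).
    digest = hmac.new(secret, row_key.encode("utf-8"), hashlib.sha256).digest()
    offset = int.from_bytes(digest[:4], "big") % (2 * max_days + 1) - max_days
    delta = timedelta(days=offset)
    # Applying the same delta to both dates keeps (stop - start) intact.
    return start + delta, stop + delta


start, stop = date(2023, 3, 1), date(2023, 3, 15)
new_start, new_stop = shift_dates("patient-42", start, stop, secret=b"demo-secret")
interval_preserved = (new_stop - new_start) == (stop - start)
```

Because the offset depends only on the row key and the secret, the same row always receives the same shift, while different rows generally receive different shifts.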

Your organization is using Google Cloud to develop and host its applications. Following Google-recommended practices, the team has created dedicated projects for development and production. Your development team is located in Canada and Germany. The operations team works exclusively from Germany to adhere to local laws. You need to ensure that admin access to Google Cloud APIs is restricted to these countries and environments. What should you do?

You are deploying regulated workloads on Google Cloud. The regulation has data residency and data access requirements. It also requires that support is provided from the same geographical location as where the data resides.

What should you do?

A customer needs to launch a 3-tier internal web application on Google Cloud Platform (GCP). The customer’s internal compliance requirements dictate that end-user access may only be allowed if the traffic seems to originate from a specific known good CIDR. The customer accepts the risk that their application will only have SYN flood DDoS protection. They want to use GCP’s native SYN flood protection.

Which product should be used to meet these requirements?
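The "known good CIDR" condition in this scenario amounts to a source-range membership test. A minimal sketch in plain Python follows; the range `203.0.113.0/24` and the name `is_allowed` are hypothetical and chosen only for the example, and the snippet does not represent any Google Cloud product.

```python
import ipaddress

# Hypothetical known-good range (a documentation-only address block).
ALLOWED_CIDR = ipaddress.ip_network("203.0.113.0/24")


def is_allowed(source_ip: str) -> bool:
    """Return True when the source address falls inside the allowed CIDR."""
    return ipaddress.ip_address(source_ip) in ALLOWED_CIDR
```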

Your company has multiple teams needing access to specific datasets across various Google Cloud data services for different projects. You need to ensure that team members can only access the data relevant to their projects and prevent unauthorized access to sensitive information within BigQuery, Cloud Storage, and Cloud SQL. What should you do?

You perform a security assessment on a customer architecture and discover that multiple VMs have public IP addresses. After providing a recommendation to remove the public IP addresses, you are told those VMs need to communicate to external sites as part of the customer's typical operations. What should you recommend to reduce the need for public IP addresses in your customer's VMs?

You are working with protected health information (PHI) for an electronic health record system. The privacy officer is concerned that sensitive data is stored in the analytics system. You are tasked with anonymizing the sensitive data in a way that is not reversible. Also, the anonymized data should not preserve the character set and length. Which Google Cloud solution should you use?

You manage a mission-critical workload for your organization, which is in a highly regulated industry. The workload uses Compute Engine VMs to analyze and process the sensitive data after it is uploaded to Cloud Storage from the endpoint computers. Your compliance team has detected that this workload does not meet the data protection requirements for sensitive data. You need to meet these requirements:

• Manage the data encryption key (DEK) outside the Google Cloud boundary.

• Maintain full control of encryption keys through a third-party provider.

• Encrypt the sensitive data before uploading it to Cloud Storage.

• Decrypt the sensitive data during processing in the Compute Engine VMs.

• Encrypt the sensitive data in memory while in use in the Compute Engine VMs.

What should you do?

Choose 2 answers

A customer’s internal security team must manage its own encryption keys for encrypting data on Cloud Storage and decides to use customer-supplied encryption keys (CSEK).

How should the team complete this task?

You have a highly sensitive BigQuery workload that contains personally identifiable information (PII) that you want to ensure is not accessible from the internet. To prevent data exfiltration, only requests from authorized IP addresses are allowed to query your BigQuery tables.

What should you do?

You have been tasked with configuring Security Command Center for your organization’s Google Cloud environment. Your security team needs to receive alerts of potential crypto mining in the organization’s compute environment and alerts for common Google Cloud misconfigurations that impact security. Which Security Command Center features should you use to configure these alerts? (Choose two.)

Your organization has Google Cloud applications that require access to external web services. You must monitor, control, and log access to these services. What should you do?

You have been tasked with inspecting IP packet data for invalid or malicious content. What should you do?

You must ensure that the keys used for at-rest encryption of your data are compliant with your organization's security controls. One security control mandates that keys get rotated every 90 days. You must implement an effective detection strategy to validate if keys are rotated as required. What should you do?
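The 90-day control in this scenario is, at its core, an age check on each key's last rotation time. The sketch below shows that check in plain Python; the key names, the timestamps, and the function `overdue_keys` are hypothetical, and the snippet does not call any Google Cloud API.

```python
from datetime import datetime, timedelta, timezone

MAX_KEY_AGE = timedelta(days=90)  # rotation period taken from the scenario


def overdue_keys(last_rotated: dict[str, datetime], now: datetime) -> list[str]:
    """Return the names of keys whose last rotation is older than MAX_KEY_AGE."""
    return sorted(name for name, ts in last_rotated.items() if now - ts > MAX_KEY_AGE)


# Hypothetical inventory: key name -> time of last rotation.
now = datetime(2024, 6, 1, tzinfo=timezone.utc)
inventory = {
    "orders-key": datetime(2024, 4, 15, tzinfo=timezone.utc),   # 47 days old
    "billing-key": datetime(2024, 1, 10, tzinfo=timezone.utc),  # 143 days old
}
flagged = overdue_keys(inventory, now)
```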

You need to enable VPC Service Controls and allow changes to perimeters in existing environments without preventing access to resources. Which VPC Service Controls mode should you use?

Your global defense company is migrating top-secret classified data to BigQuery and Cloud Storage. National security regulations demand that master encryption key material never leaves the accredited on-premises cryptographic hardware. You must retain the unilateral ability to revoke data access, independent of any cloud provider. What should you do?

All logs in your organization are aggregated into a centralized Google Cloud logging project for analysis and long-term retention. While most of the log data can be viewed by operations teams, there are specific sensitive fields (e.g., protoPayload.authenticationInfo.principalEmail) that contain identifiable information that should be restricted only to security teams. You need to implement a solution that allows different teams to view their respective application logs in the centralized logging project. It must also restrict access to specific sensitive fields within those logs to only a designated security group. Your solution must ensure that other fields in the same log entry remain visible to other authorized groups. What should you do?

Your organization uses a microservices architecture based on Google Kubernetes Engine (GKE). Security reviews recommend tighter controls around deployed container images to reduce potential vulnerabilities and maintain compliance. You need to implement an automated system by using managed services to ensure that only approved container images are deployed to the GKE clusters. What should you do?

Your Google Cloud organization allows for administrative capabilities to be distributed to each team through provision of a Google Cloud project with Owner role (roles/owner). The organization contains thousands of Google Cloud projects. Security Command Center Premium has surfaced multiple OPEN_MYSQL_PORT findings. You are enforcing the guardrails and need to prevent these types of common misconfigurations.

What should you do?

Your organization strives to be a market leader in software innovation. You provided a large number of Google Cloud environments so developers can test the integration of Gemini in Vertex AI into their existing applications or create new projects. Your organization has 200 developers and a five-person security team. You must put proper preventive and detective security policies in place across the Google Cloud environments. What should you do? (Choose 2 answers)

You run applications on Cloud Run. You already enabled container analysis for vulnerability scanning. However, you are concerned about the lack of control on the applications that are deployed. You must ensure that only trusted container images are deployed on Cloud Run.

What should you do?

Choose 2 answers

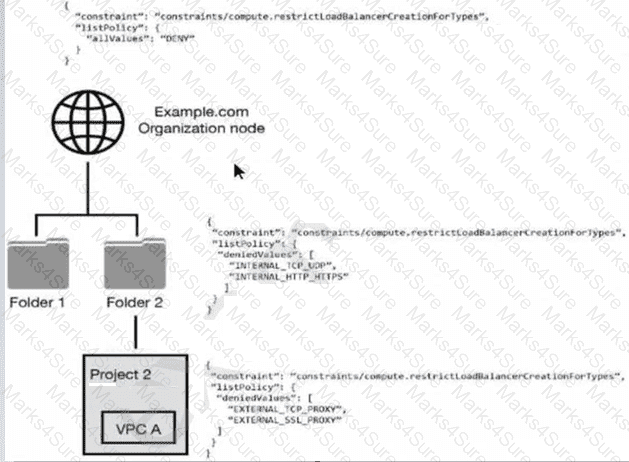

You have the following resource hierarchy. There is an organization policy at each node in the hierarchy as shown. Which load balancer types are denied in VPC A?

Your organization has recently migrated sensitive customer data to Cloud Storage buckets. For compliance reasons, you must ensure that all vendor data access and administrative access by Google personnel is logged. What should you do?

You work for a healthcare provider that is expanding into the cloud to store and process sensitive patient data. You must ensure the chosen Google Cloud configuration meets these strict regulatory requirements:

Data must reside within specific geographic regions.

Certain administrative actions on patient data require explicit approval from designated compliance officers.

Access to patient data must be auditable.

What should you do?

A customer is collaborating with another company to build an application on Compute Engine. The customer is building the application tier in their GCP Organization, and the other company is building the storage tier in a different GCP Organization. This is a 3-tier web application. Communication between portions of the application must not traverse the public internet by any means.

Which connectivity option should be implemented?

You are a Security Administrator at your organization. You need to restrict service account creation capability within production environments. You want to accomplish this centrally across the organization. What should you do?

You have noticed an increased number of phishing attacks across your enterprise user accounts. You want to implement the Google 2-Step Verification (2SV) option that uses a cryptographic signature to authenticate a user and verify the URL of the login page. Which Google 2SV option should you use?

A customer implements Cloud Identity-Aware Proxy for their ERP system hosted on Compute Engine. Their security team wants to add a security layer so that the ERP systems only accept traffic from Cloud Identity-Aware Proxy.

What should the customer do to meet these requirements?

Your organization uses Google Workspace Enterprise Edition for authentication. You are concerned about employees leaving their laptops unattended for extended periods of time after authenticating into Google Cloud. You must prevent malicious people from using an employee's unattended laptop to modify their environment.

What should you do?

You are migrating an on-premises data warehouse to BigQuery, Cloud SQL, and Cloud Storage. You need to configure security services in the data warehouse. Your company compliance policies mandate that the data warehouse must:

• Protect data at rest with full lifecycle management on cryptographic keys

• Implement a separate key management provider from data management

• Provide visibility into all encryption key requests

What services should be included in the data warehouse implementation?

Choose 2 answers

When working with agents in a support center via online chat, an organization’s customers often share pictures of their documents with personally identifiable information (PII). The organization that owns the support center is concerned that the PII is being stored in their databases as part of the regular chat logs they retain for review by internal or external analysts for customer service trend analysis.

Which Google Cloud solution should the organization use to help resolve this concern for the customer while still maintaining data utility?

Your organization has established a highly sensitive project within a VPC Service Controls perimeter. You need to ensure that only users meeting specific contextual requirements such as having a company-managed device, a specific location, and a valid user identity can access resources within this perimeter. You want to evaluate the impact of this change without blocking legitimate access. What should you do?

Your organization hosts a financial services application running on Compute Engine instances for a third-party company. The third-party company’s servers that will consume the application also run on Compute Engine in a separate Google Cloud organization. You need to configure a secure network connection between the Compute Engine instances. You have the following requirements:

The network connection must be encrypted.

The communication between servers must be over private IP addresses.

What should you do?

Your organization operates in a highly regulated environment and has a stringent set of compliance requirements for protecting customer data. You must encrypt data while in use to meet regulations. What should you do?

After completing a security vulnerability assessment, you learned that cloud administrators leave Google Cloud CLI sessions open for days. You need to reduce the risk of attackers who might exploit these open sessions by setting these sessions to the minimum duration.

What should you do?

An organization is starting to move its infrastructure from its on-premises environment to Google Cloud Platform (GCP). The first step the organization wants to take is to migrate its current data backup and disaster recovery solutions to GCP for later analysis. The organization’s production environment will remain on-premises for an indefinite time. The organization wants a scalable and cost-efficient solution.

Which GCP solution should the organization use?

You have numerous private virtual machines on Google Cloud. You occasionally need to manage the servers through Secure Socket Shell (SSH) from a remote location. You want to configure remote access to the servers in a manner that optimizes security and cost efficiency.

What should you do?

You need to use Cloud External Key Manager to create an encryption key to encrypt specific BigQuery data at rest in Google Cloud. Which steps should you do first?

You are developing a new application that uses exclusively Compute Engine VMs. Once a day, this application will execute five different batch jobs. Each of the batch jobs requires a dedicated set of permissions on Google Cloud resources outside of your application. You need to design a secure access concept for the batch jobs that adheres to the least-privilege principle.

What should you do?

You want to limit the images that can be used as the source for boot disks. These images will be stored in a dedicated project.

What should you do?

Your company has deployed an artificial intelligence model in a central project. This model has a lot of sensitive intellectual property and must be kept strictly isolated from the internet. You must expose the model endpoint only to a defined list of projects in your organization. What should you do?

You need to set up a Cloud Interconnect connection between your company's on-premises data center and VPC host network. You want to make sure that on-premises applications can only access Google APIs over the Cloud Interconnect and not through the public internet. You are required to only use APIs that are supported by VPC Service Controls to mitigate against exfiltration risk to non-supported APIs. How should you configure the network?

Your team needs to prevent users from creating projects in the organization. Only the DevOps team should be allowed to create projects on behalf of the requester.

Which two tasks should your team perform to handle this request? (Choose two.)

You are part of a security team investigating a compromised service account key. You need to audit which new resources were created by the service account.

What should you do?

An organization is migrating from their current on-premises productivity software systems to G Suite. Some network security controls were in place that were mandated by a regulatory body in their region for their previous on-premises system. The organization’s risk team wants to ensure that network security controls are maintained and effective in G Suite. A security architect supporting this migration has been asked to ensure that network security controls are in place as part of the new shared responsibility model between the organization and Google Cloud.

What solution would help meet the requirements?

Your organization must follow the Payment Card Industry Data Security Standard (PCI DSS). To prepare for an audit, you must detect deviations at an infrastructure-as-a-service level in your Google Cloud landing zone. What should you do?

Last week, a company deployed a new App Engine application that writes logs to BigQuery. No other workloads are running in the project. You need to validate that all data written to BigQuery was done using the App Engine Default Service Account.

What should you do?

You work for a large organization that runs many custom training jobs on Vertex AI. A recent compliance audit identified a security concern. All jobs currently use the Vertex AI service agent. The audit mandates that each training job must be isolated, with access only to the required Cloud Storage buckets, following the principle of least privilege. You need to design a secure, scalable solution to enforce this requirement. What should you do?

Your financial services company is migrating its operations to Google Cloud. You are implementing a centralized logging strategy to meet strict regulatory compliance requirements. Your company's Google Cloud organization has a dedicated folder for all production projects. All audit logs, including Data Access logs from all current and future projects within this production folder, must be securely collected and stored in a central BigQuery dataset for long-term retention and analysis. To prevent duplicate log storage and to enforce centralized control, you need to implement a logging solution that intercepts and overrides any project-level log sinks for these audit logs, to ensure that logs are not inadvertently routed elsewhere. What should you do?

You are implementing data protection by design and in accordance with GDPR requirements. As part of design reviews, you are told that you need to manage the encryption key for a solution that includes workloads for Compute Engine, Google Kubernetes Engine, Cloud Storage, BigQuery, and Pub/Sub. Which option should you choose for this implementation?

You are working with a network engineer at your company who is extending a large BigQuery-based data analytics application. Currently, all of the data for that application is ingested from on-premises applications over a Dedicated Interconnect connection with a 20Gbps capacity. You need to onboard a data source on Microsoft Azure that requires a daily ingestion of approximately 250 TB of data. You need to ensure that the data gets transferred securely and efficiently. What should you do?

Your organization uses Google Workspace as the primary identity provider for Google Cloud. Users in your organization initially created their passwords. You need to improve password security due to a recent security event. What should you do?

Your company is deploying a large number of containerized applications to GKE. The existing CI/CD pipeline uses Cloud Build to construct container images, transfers the images to Artifact Registry, and then deploys the images to GKE. You need to ensure that only images that have passed vulnerability scanning and meet specific corporate policies are allowed to be deployed. The process needs to be automated and integrated into the existing CI/CD pipeline. What should you do?

Your organization wants to protect its supply chain from attacks. You need to automatically scan your deployment pipeline for vulnerabilities and ensure only scanned and verified containers can be executed in your production environment. You want to minimize management overhead. What should you do?

You manage your organization's Security Operations Center (SOC). You currently monitor and detect network traffic anomalies in your Google Cloud VPCs based on packet header information. However, you want the capability to explore network flows and their payload to aid investigations. Which Google Cloud product should you use?

Your company is migrating a customer database that contains personally identifiable information (PII) to Google Cloud. To prevent accidental exposure, this data must be protected at rest. You need to ensure that all PII is automatically discovered and redacted, or pseudonymized, before any type of analysis. What should you do?

You are deploying a web application hosted on Compute Engine. A business requirement mandates that application logs are preserved for 12 years and data is kept within European boundaries. You want to implement a storage solution that minimizes overhead and is cost-effective. What should you do?

While migrating your organization’s infrastructure to GCP, a large number of users will need to access GCP Console. The Identity Management team already has a well-established way to manage your users and wants to keep using your existing Active Directory or LDAP server along with the existing SSO password.

What should you do?

You are auditing all your Google Cloud resources in the production project. You want to identify all principals who can change firewall rules.

What should you do?

You work for a large organization where each business unit has thousands of users. You need to delegate management of access control permissions to each business unit. You have the following requirements:

Each business unit manages access controls for their own projects.

Each business unit manages access control permissions at scale.

Business units cannot access other business units' projects.

Users lose their access if they move to a different business unit or leave the company.

Users and access control permissions are managed by the on-premises directory service.

What should you do? (Choose two.)

You need to implement an encryption at-rest strategy that reduces key management complexity for non-sensitive data and protects sensitive data while providing the flexibility of controlling the key residency and rotation schedule. FIPS 140-2 L1 compliance is required for all data types. What should you do?

Your financial services company needs to process customer personally identifiable information (PII) for analytics while adhering to strict privacy regulations. You must transform this data to protect individual privacy to ensure that the data retains its original format and consistency for analytical integrity. Your solution must avoid full irreversible deletion. What should you do?

A website design company recently migrated all customer sites to App Engine. Some sites are still in progress and should only be visible to customers and company employees from any location.

Which solution will restrict access to the in-progress sites?

Your company’s cloud security policy dictates that VM instances should not have an external IP address. You need to identify the Google Cloud service that will allow VM instances without external IP addresses to connect to the internet to update the VMs. Which service should you use?

A security audit uncovered several inconsistencies in your project’s Identity and Access Management (IAM) configuration. Some service accounts have overly permissive roles, and a few external collaborators have more access than necessary. You need to gain detailed visibility into changes to IAM policies, user activity, service account behavior, and access to sensitive projects. What should you do?

Your organization relies heavily on virtual machines (VMs) in Compute Engine. Due to team growth and resource demands, VM sprawl is becoming problematic. Maintaining consistent security hardening and timely package updates poses an increasing challenge. You need to centralize VM image management and automate the enforcement of security baselines throughout the virtual machine lifecycle. What should you do?

You have an application where the frontend is deployed on a managed instance group in subnet A and the data layer is stored on a MySQL Compute Engine virtual machine (VM) in subnet B on the same VPC. Subnet A and Subnet B hold several other Compute Engine VMs. You only want to allow the application frontend to access the data in the application's MySQL instance on port 3306.

What should you do?

You are setting up a CI/CD pipeline to deploy containerized applications to your production clusters on Google Kubernetes Engine (GKE). You need to prevent containers with known vulnerabilities from being deployed. You have the following requirements for your solution:

Must be cloud-native

Must be cost-efficient

Minimize operational overhead

How should you accomplish this? (Choose two.)

An organization's security and risk management teams are concerned about where their responsibility lies for certain production workloads they are running in Google Cloud Platform (GCP), and where Google's responsibility lies. They are mostly running workloads using Google Cloud's Platform-as-a-Service (PaaS) offerings, including App Engine primarily.

Which one of these areas in the technology stack would they need to focus on as their primary responsibility when using App Engine?

You have just created a new log bucket to replace the _Default log bucket. You want to route all log entries that are currently routed to the _Default log bucket to this new log bucket in the most efficient manner. What should you do?

You are responsible for the operation of your company's application that runs on Google Cloud. The database for the application will be maintained by an external partner. You need to give the partner team access to the database. This access must be restricted solely to the database and cannot extend to any other resources within your company's network. Your solution should follow Google-recommended practices. What should you do?

The security operations team needs access to the security-related logs for all projects in their organization. They have the following requirements:

Follow the least privilege model by having only view access to logs.

Have access to Admin Activity logs.

Have access to Data Access logs.

Have access to Access Transparency logs.

Which Identity and Access Management (IAM) role should the security operations team be granted?

You want to prevent users from accidentally deleting a Shared VPC host project. Which organization-level policy constraint should you enable?

Which international compliance standard provides guidelines for information security controls applicable to the provision and use of cloud services?

Your organization is implementing a Zero Trust security model and using Chrome Enterprise Premium. The company is interested in governing access to sensitive data stored in Cloud Storage. You need to configure access controls that ensure only authorized users on managed devices can access this data, regardless of their network location. Access should be restricted based on the device's security posture. This requires up-to-date operating system patches and antivirus software. What should you do?

You are implementing communications restrictions for specific services in your Google Cloud organization. Your data analytics team works in a dedicated folder. You need to ensure that access to BigQuery is controlled for that folder and its projects. The data analytics team must be able to control the restrictions only at the folder level. What should you do?

In an effort for your company messaging app to comply with FIPS 140-2, a decision was made to use GCP compute and network services. The messaging app architecture includes a Managed Instance Group (MIG) that controls a cluster of Compute Engine instances. The instances use Local SSDs for data caching and UDP for instance-to-instance communications. The app development team is willing to make any changes necessary to comply with the standard.

Which options should you recommend to meet the requirements?

A customer deploys an application to App Engine and needs to check for Open Web Application Security Project (OWASP) vulnerabilities.

Which service should be used to accomplish this?

A company has been running their application on Compute Engine. A bug in the application allowed a malicious user to repeatedly execute a script that results in the Compute Engine instance crashing. Although the bug has been fixed, you want to get notified in case this hack re-occurs.

What should you do?

Your organization needs to restrict the types of Google Cloud services that can be deployed within specific folders to enforce compliance requirements. You must apply these restrictions only to the designated folders without affecting other parts of the resource hierarchy. You want to use the most efficient and simple method. What should you do?

Your team needs to make sure that a Compute Engine instance does not have access to the internet or to any Google APIs or services.

Which two settings must remain disabled to meet these requirements? (Choose two.)

You are a consultant for an organization that is considering migrating their data from its private cloud to Google Cloud. The organization’s compliance team is not familiar with Google Cloud and needs guidance on how compliance requirements will be met on Google Cloud. One specific compliance requirement is for customer data at rest to reside within specific geographic boundaries. Which option should you recommend for the organization to meet their data residency requirements on Google Cloud?

You want data on Compute Engine disks to be encrypted at rest with keys managed by Cloud Key Management Service (KMS). Cloud Identity and Access Management (IAM) permissions to these keys must be managed in a grouped way because the permissions should be the same for all keys.

What should you do?

Your application is deployed as a highly available cross-region solution behind a global external HTTP(S) load balancer. You notice significant spikes in traffic from multiple IP addresses but it is unknown whether the IPs are malicious. You are concerned about your application's availability. You want to limit traffic from these clients over a specified time interval.

What should you do?

You plan to deploy your cloud infrastructure using a CI/CD cluster hosted on Compute Engine. You want to minimize the risk of its credentials being stolen by a third party. What should you do?