An administrator has just installed the OpenShift cluster as the first step of installing Cloud Pak for Integration.

What is an indication of successful completion of the OpenShift Cluster installation, prior to any other cluster operation?

The command "which oc" shows that the OpenShift Command Line Interface (oc) is successfully installed.

The cluster credentials are included at the end of the /.openshift_install.log file.

The command "oc get nodes" returns the list of nodes in the cluster.

The OpenShift Admin console can be opened with the default user and will display the cluster statistics.

After successfully installing an OpenShift cluster, the most reliable way to confirm that the cluster is up and running is to check the status of its nodes with the oc get nodes command.

The command oc get nodes lists all the nodes in the cluster and their current status.

If the installation is successful, the nodes should be in a "Ready" state, indicating that the cluster is functional and prepared for further configuration, including the installation of IBM Cloud Pak for Integration (CP4I).
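As a rough sketch of this check, the Python snippet below inspects JSON shaped like the output of `oc get nodes -o json` and reports whether every node has a `Ready` condition with status `True`. The helper name `all_nodes_ready` and the sample node names are hypothetical; the `items[].status.conditions[]` layout is the standard Kubernetes Node object.

```python
import json


def all_nodes_ready(node_list: dict) -> bool:
    """Return True when every node reports a Ready condition with status "True"."""
    nodes = node_list.get("items", [])
    if not nodes:
        return False  # an empty node list means the cluster is not usable yet
    for node in nodes:
        conditions = node.get("status", {}).get("conditions", [])
        ready = any(
            c.get("type") == "Ready" and c.get("status") == "True"
            for c in conditions
        )
        if not ready:
            return False
    return True


# Hypothetical sample shaped like the output of `oc get nodes -o json`.
sample = json.loads("""
{"items": [
  {"metadata": {"name": "master-0"},
   "status": {"conditions": [{"type": "Ready", "status": "True"}]}},
  {"metadata": {"name": "worker-0"},
   "status": {"conditions": [{"type": "Ready", "status": "False"}]}}
]}
""")

print(all_nodes_ready(sample))  # False: worker-0 is not Ready
```

In practice the JSON would come from running the command against the new cluster; a `False` result means at least one node still needs attention before installing CP4I.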

Analysis of the Options:

Option A (Incorrect – which oc): This only verifies that the OpenShift CLI (oc) is installed on the local system; it does not confirm the cluster installation.

Option B (Incorrect – Checking /.openshift_install.log): While the installation log may indicate a successful install, it does not confirm the operational status of the cluster.

Option C (Correct – oc get nodes): This command confirms that the cluster is running and provides a status check on all nodes. If the nodes are listed and marked as "Ready", the OpenShift cluster is successfully installed.

Option D (Incorrect – OpenShift Admin Console Access): While the OpenShift Web Console can be accessed if the cluster is installed, this does not guarantee that the cluster is fully operational. The most definitive check is the oc get nodes command.

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

IBM Cloud Pak for Integration Installation Guide

Red Hat OpenShift Documentation – Cluster Installation

Verifying OpenShift Cluster Readiness (oc get nodes)

When instantiating a new capability through the Platform Navigator, what must be done to see distributed tracing data?

Press the 'enable' button in the Operations Dashboard.

Add 'operationsDashboard: true' to the deployment YAML.

Run the oc register command against the capability.

Register the capability with the Operations Dashboard.

In IBM Cloud Pak for Integration (CP4I) v2021.2, when instantiating a new capability via the Platform Navigator, distributed tracing data is not automatically available. To enable tracing and observability for a capability, it must be registered with the Operations Dashboard.

Why is "Register the capability with the Operations Dashboard" the correct answer?

The Operations Dashboard in CP4I provides centralized observability, logging, and distributed tracing across integration components.

Capabilities such as IBM API Connect, App Connect, IBM MQ, and Event Streams need to be explicitly registered with the Operations Dashboard to collect and display tracing data.

Registration links the capability with the distributed tracing service, allowing telemetry data to be captured.

Why the Other Options Are Incorrect?

| Option | Explanation | Correct? |
| --- | --- | --- |
| A. Press the 'enable' button in the Operations Dashboard. | ❌ Incorrect – There is no single 'enable' button that automatically registers capabilities. Manual registration is required. | ❌ |
| B. Add 'operationsDashboard: true' to the deployment YAML. | ❌ Incorrect – This setting alone does not enable distributed tracing. The capability still needs to be registered with the Operations Dashboard. | ❌ |
| C. Run the oc register command against the capability. | ❌ Incorrect – There is no oc register command in OpenShift or CP4I for registering capabilities with the Operations Dashboard. | ❌ |

Final Answer: ✅ D. Register the capability with the Operations Dashboard

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

IBM Cloud Pak for Integration - Operations Dashboard

Enabling Distributed Tracing in IBM CP4I

IBM CP4I - Observability and Monitoring

What is the minimum number of Elasticsearch nodes required for a highly-available logging solution?

1

2

3

7

In IBM Cloud Pak for Integration (CP4I) v2021.2, which runs on Red Hat OpenShift, logging is handled using the OpenShift Logging Operator, which often uses Elasticsearch as the log storage backend.

For a highly available (HA) Elasticsearch cluster, the minimum number of nodes required is 3.

Why Are 3 Elasticsearch Nodes Required for High Availability?

Elasticsearch uses a quorum-based system for cluster state management.

A minimum of three nodes ensures that the cluster can maintain a quorum if one node fails.

A majority of the master-eligible nodes must remain reachable to elect a master. With three master-eligible nodes, two still form a majority, so the cluster can elect a new master if the active one fails.

Replication across three nodes prevents data loss and improves fault tolerance.

Example Elasticsearch Deployment for HA: A standard HA Elasticsearch setup consists of:

3 master-eligible nodes (manage cluster state).

At least 2 data nodes (store logs and allow redundancy).

Optional client nodes (handle queries to offload work from data nodes).

Why Answer C (3) is Correct?

Ensures HA by allowing Elasticsearch to withstand node failures without loss of cluster control.

Prevents split-brain scenarios, which can occur when a cluster with an even number of nodes (e.g., 2) cannot reach a quorum.

Recommended by IBM and Red Hat for OpenShift logging solutions.
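The quorum argument can be checked with a few lines of arithmetic. The sketch below assumes a simple majority rule (more than half of the master-eligible nodes must be reachable); the function names are illustrative and not part of any Elasticsearch API.

```python
def quorum(master_eligible_nodes: int) -> int:
    """Smallest strict majority of the master-eligible nodes."""
    return master_eligible_nodes // 2 + 1


def tolerated_failures(master_eligible_nodes: int) -> int:
    """How many nodes can fail while a majority is still reachable."""
    return master_eligible_nodes - quorum(master_eligible_nodes)


# Node counts taken from the answer options: 1, 2, 3, and 7.
for n in (1, 2, 3, 7):
    print(f"nodes={n} quorum={quorum(n)} tolerated_failures={tolerated_failures(n)}")
```

Under this rule, one and two nodes tolerate no failures, while three is the smallest count that survives the loss of a single node.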

Explanation of Incorrect Answers:

A. 1 → Incorrect

A single-node Elasticsearch deployment is not HA because if the node fails, all logs are lost.

B. 2 → Incorrect

Two nodes cannot form a quorum, meaning the cluster cannot elect a leader reliably.

This could lead to split-brain scenarios or a complete failure when one node goes down.

D. 7 → Incorrect

While a larger cluster (e.g., 7 nodes) improves scalability and performance, it is not the minimum requirement for HA.

Three nodes are sufficient for high availability.

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

IBM Cloud Pak for Integration Logging and Monitoring

OpenShift Logging Operator - Elasticsearch Deployment

Elasticsearch High Availability Best Practices

IBM OpenShift Logging Solution Architecture

Which option should an administrator choose if they need to run Cloud Pak for Integration (CP4I) on AWS but do not want to have to manage the OpenShift layer themselves?

Deploy CP4I onto AWS ROSA.

Use installer-provisioned infrastructure to deploy OCP and CP4I onto EC2.

Use the "CP4I Quick Start on AWS" to deploy.

Use the Terraform scripts for provisioning CP4I and OpenShift, which are available on IBM's GitHub.

When deploying IBM Cloud Pak for Integration (CP4I) v2021.2 on AWS, an administrator has multiple options for managing the OpenShift layer. However, if the goal is to avoid managing OpenShift manually, the best approach is to deploy CP4I onto AWS ROSA (Red Hat OpenShift Service on AWS).

Why is AWS ROSA the Best Choice?

Managed OpenShift: ROSA is a fully managed OpenShift service, meaning AWS and Red Hat handle the deployment, updates, patching, and infrastructure maintenance of OpenShift.

Simplified Deployment: Administrators can directly deploy CP4I on ROSA without worrying about installing and maintaining OpenShift on AWS manually.

IBM Support: IBM Cloud Pak solutions, including CP4I, are certified to run on ROSA, ensuring compatibility and optimized performance.

Integration with AWS Services: ROSA allows seamless integration with AWS-native services like S3, RDS, and IAM for authentication and storage.

Why Not the Other Options?

B. Installer-provisioned Infrastructure on EC2 – The installer automates provisioning, but the administrator still owns and operates the resulting OpenShift cluster on EC2, which increases operational overhead.

C. CP4I Quick Start on AWS – IBM provides a Quick Start guide for deploying CP4I, but it assumes you are managing OpenShift yourself. This does not eliminate OpenShift management.

D. Terraform scripts from IBM’s GitHub – These scripts help automate provisioning but still require the administrator to manage OpenShift themselves.

Thus, for a fully managed OpenShift solution on AWS, AWS ROSA is the best option.

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

IBM Cloud Pak for Integration Documentation

IBM Cloud Pak for Integration on AWS ROSA

Deploying Cloud Pak for Integration on AWS

Red Hat OpenShift Service on AWS (ROSA) Overview

What role is required to install OpenShift GitOps?

cluster-operator

cluster-admin

admin

operator

In Red Hat OpenShift, installing OpenShift GitOps (based on Argo CD) requires elevated cluster-wide permissions because the installation process:

Deploys Custom Resource Definitions (CRDs).

Creates Operators and associated resources.

Modifies cluster-scoped components like role-based access control (RBAC) policies.

Only a user with cluster-admin privileges can perform these actions, making cluster-admin the correct role for installing OpenShift GitOps.

Command to Install OpenShift GitOps: oc apply -f openshift-gitops-subscription.yaml

This operation requires cluster-wide permissions, which only the cluster-admin role provides.
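The contents of openshift-gitops-subscription.yaml are not shown here. As a hedged illustration, the sketch below builds what an Operator Lifecycle Manager Subscription for the GitOps operator commonly looks like and prints it as JSON, which `oc apply -f` also accepts. The package name, catalog source, and namespaces follow the usual OperatorHub conventions; the `channel` default is an assumption that varies by release, and the function name is hypothetical.

```python
import json


def gitops_subscription(channel: str = "stable") -> dict:
    """Build an OLM Subscription manifest for the OpenShift GitOps operator.

    The channel value is an assumption; check the channels offered by your catalog.
    """
    return {
        "apiVersion": "operators.coreos.com/v1alpha1",
        "kind": "Subscription",
        "metadata": {
            "name": "openshift-gitops-operator",
            "namespace": "openshift-operators",
        },
        "spec": {
            "channel": channel,
            "installPlanApproval": "Automatic",
            "name": "openshift-gitops-operator",
            "source": "redhat-operators",
            "sourceNamespace": "openshift-marketplace",
        },
    }


manifest = gitops_subscription()
print(json.dumps(manifest, indent=2))
```

Because the Subscription lives in the cluster-wide openshift-operators namespace and triggers CRD creation, applying it fails for users who hold only a namespace-scoped role such as admin.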

Why the Other Options Are Incorrect?

| Option | Explanation | Correct? |
| --- | --- | --- |
| A. cluster-operator | ❌ Incorrect – No such default role exists in OpenShift. Operators are managed within namespaces but cannot install GitOps at the cluster level. | ❌ |
| C. admin | ❌ Incorrect – The admin role provides namespace-level permissions, but GitOps requires cluster-wide access to install Operators and CRDs. | ❌ |
| D. operator | ❌ Incorrect – This is not a valid OpenShift role. Operators are software components managed by OpenShift, but an operator role does not exist for installation purposes. | ❌ |

Final Answer: ✅ B. cluster-admin

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

Red Hat OpenShift GitOps Installation Guide

Red Hat OpenShift RBAC Roles and Permissions

IBM Cloud Pak for Integration - OpenShift GitOps Best Practices

Which two Red Hat OpenShift Operators should be installed to enable OpenShift Logging?

OpenShift Console Operator

OpenShift Logging Operator

OpenShift Log Collector

OpenShift Centralized Logging Operator

OpenShift Elasticsearch Operator

In IBM Cloud Pak for Integration (CP4I) v2021.2, which runs on Red Hat OpenShift, logging is a critical component for monitoring cluster and application activities. To enable OpenShift Logging, two key operators must be installed:

OpenShift Logging Operator (B)

This operator is responsible for managing the logging stack in OpenShift.

It helps configure and deploy logging components like Fluentd, Kibana, and Elasticsearch within the OpenShift cluster.

It provides a unified way to collect and visualize logs across different workloads.

OpenShift Elasticsearch Operator (E)

This operator manages the Elasticsearch cluster, which is the central data store for log aggregation in OpenShift.

Elasticsearch stores logs collected from cluster nodes and applications, making them searchable and analyzable via Kibana.

Without this operator, OpenShift Logging cannot function, as it depends on Elasticsearch for log storage.

Explanation of Incorrect Answers:

A. OpenShift Console Operator → Incorrect

The OpenShift Console Operator manages the web UI of OpenShift but has no role in logging.

It does not collect, store, or manage logs.

C. OpenShift Log Collector → Incorrect

There is no official OpenShift component or operator named "OpenShift Log Collector."

Log collection is handled by Fluentd, which is managed by the OpenShift Logging Operator.

D. OpenShift Centralized Logging Operator → Incorrect

This is not a valid OpenShift operator.

The correct operator for centralized logging is the OpenShift Logging Operator.

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

OpenShift Logging Overview

OpenShift Logging Operator Documentation

OpenShift Elasticsearch Operator Documentation

IBM Cloud Pak for Integration Logging Configuration

When using the Platform Navigator, what permission is required to add users and user groups?

root

Super-user

Administrator

User

In IBM Cloud Pak for Integration (CP4I) v2021.2, the Platform Navigator is the central UI for managing integration capabilities, including user and access control. To add users and user groups, the required permission level is Administrator.

Why is "Administrator" the Correct Answer?

User Management Capabilities:

The Administrator role in Platform Navigator has full access to user and group management functions, including:

Adding new users

Assigning roles

Managing access policies

RBAC (Role-Based Access Control) Enforcement:

CP4I enforces RBAC to restrict actions based on roles.

Only Administrators can modify user access, ensuring security compliance.

Access Control via OpenShift and IAM Integration:

User management in CP4I integrates with IBM Cloud IAM or OpenShift User Management.

The Administrator role ensures correct permissions for authentication and authorization.

Why Not the Other Options?

| Option | Reason for Exclusion |
| --- | --- |
| A. root | "root" is a Linux system user and not a role in Platform Navigator. CP4I does not grant UI-based root access. |
| B. Super-user | No predefined "Super-user" role exists in CP4I. If referring to an elevated user, it still does not match the Administrator role in Platform Navigator. |
| D. User | Regular "User" roles have view-only or limited permissions and cannot manage users or groups. |

Thus, the Administrator role is the correct choice for adding users and user groups in Platform Navigator.

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

IBM Cloud Pak for Integration - Platform Navigator Overview

Managing Users in Platform Navigator

Role-Based Access Control in CP4I

OpenShift User Management and Authentication

What team is created as part of the initial installation of Cloud Pak for Integration?

zen followed by a timestamp.

zen followed by a GUID.

zenteam followed by a timestamp.

zenteam followed by a GUID.

During the initial installation of IBM Cloud Pak for Integration (CP4I) v2021.2, a default team is automatically created to manage access control and user roles within the system. This team is named "zenteam", followed by a Globally Unique Identifier (GUID).

Key Points:

"zenteam" is the default team created as part of CP4I’s initial installation.

A GUID (Globally Unique Identifier) is appended to "zenteam" to ensure uniqueness across different installations.

This team is crucial for user and role management, as it provides access to various components of CP4I such as API management, messaging, and event streams.

The GUID ensures that multiple deployments within the same cluster do not conflict in terms of team naming.

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

IBM Cloud Pak for Integration Documentation

IBM Knowledge Center - User and Access Management

IBM CP4I Installation Guide

When using IBM Cloud Pak for Integration and deploying the DataPower Gateway service, which statement is true?

Only the datapower-cp4i image can be deployed.

A selected list of add-on modules can be enabled on DataPower Gateway.

This image deployment brings all the functionality of DataPower as it runs with root permissions.

The datapower-cp4i image will be downloaded from the dockerhub enterprise account of IBM.

When deploying IBM DataPower Gateway as part of IBM Cloud Pak for Integration (CP4I) v2021.2, administrators can enable a selected list of add-on modules based on their requirements. This allows customization and optimization of the deployment by enabling only the necessary features.

Why Option B is Correct:

IBM DataPower Gateway deployed in Cloud Pak for Integration is a containerized version that supports modular configurations.

Administrators can enable or disable add-on modules to optimize resource utilization and security.

Some of these modules include:

API Gateway

XML Processing

MQ Connectivity

Security Policies

This flexibility helps in reducing overhead and ensuring that only the necessary capabilities are deployed.

Explanation of Incorrect Answers:

A. Only the datapower-cp4i image can be deployed. → Incorrect

While datapower-cp4i is the primary image used within Cloud Pak for Integration, other variations of DataPower can also be deployed outside CP4I (e.g., standalone DataPower Gateway).

C. This image deployment brings all the functionality of DataPower as it runs with root permissions. → Incorrect

The DataPower container runs as a non-root user for security reasons.

Not all functionalities available in the bare-metal or VM-based DataPower appliance are enabled by default in the containerized version.

D. The datapower-cp4i image will be downloaded from the dockerhub enterprise account of IBM. → Incorrect

IBM does not use DockerHub for distributing CP4I container images.

Instead, DataPower images are pulled from the IBM Entitled Registry (cp.icr.io), which requires an IBM Entitlement Key for access.
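To make the Entitled Registry point above concrete, here is a minimal sketch of how pull credentials for cp.icr.io are typically packaged as a Docker config payload, assuming the registry user name `cp` with the entitlement key as the password. The function name and the placeholder key are hypothetical; a real key comes from the administrator's IBM account.

```python
import base64
import json


def entitled_registry_config(entitlement_key: str) -> dict:
    """Build a Docker config payload for pulling images from cp.icr.io."""
    user = "cp"  # assumed fixed user name for the entitled registry
    token = base64.b64encode(f"{user}:{entitlement_key}".encode("utf-8")).decode("ascii")
    return {
        "auths": {
            "cp.icr.io": {
                "username": user,
                "password": entitlement_key,
                "auth": token,
            }
        }
    }


# Placeholder value only; never commit a real entitlement key.
config = entitled_registry_config("EXAMPLE-KEY")
print(json.dumps(config, indent=2))
```

A payload of this shape is what a `kubernetes.io/dockerconfigjson` pull secret carries, which is why a DockerHub account plays no part in the deployment.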

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

IBM Cloud Pak for Integration - Deploying DataPower Gateway

IBM DataPower Gateway Container Deployment Guide

IBM Entitled Registry - Pulling CP4I Images

Which statement is true about the Authentication URL user registry in API Connect?

It authenticates Developer Portal sites.

It authenticates users defined in a provider organization.

It authenticates Cloud Manager users.

It authenticates users by referencing a custom identity provider.

In IBM API Connect, an Authentication URL user registry is a type of user registry that allows authentication by delegating user verification to an external identity provider. This is typically used when API Connect needs to integrate with custom authentication mechanisms, such as OAuth, OpenID Connect, or SAML-based identity providers.

When configured, API Connect does not store user credentials locally. Instead, it passes the authentication request to the specified external authentication URL, and if the endpoint returns a successful response, the user is authenticated.
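A sketch of what this delegation can look like from the caller's side, assuming the credentials are presented to the endpoint as an HTTP Basic `Authorization` header on a GET request and that an HTTP 200 response means the user is accepted. The function names are hypothetical, and no request is sent unless `authenticate` is called with a real URL.

```python
import base64
import urllib.error
import urllib.request


def basic_auth_header(username: str, password: str) -> str:
    """Encode credentials as an HTTP Basic Authorization header value."""
    raw = f"{username}:{password}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


def authenticate(auth_url: str, username: str, password: str) -> bool:
    """Return True only when the external endpoint answers with HTTP 200."""
    request = urllib.request.Request(
        auth_url,
        headers={"Authorization": basic_auth_header(username, password)},
    )
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return response.status == 200
    except urllib.error.URLError:
        # Covers HTTP errors such as 401 as well as unreachable endpoints.
        return False


# Building the header needs no network access.
print(basic_auth_header("alice", "secret"))
```

The point of the pattern is that the registry holds no passwords of its own: the custom identity provider behind the URL makes the decision.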

Why Answer D is Correct:

The Authentication URL user registry is specifically designed to reference an external custom identity provider.

This enables API Connect to integrate with external authentication systems like LDAP, Active Directory, OAuth, and OpenID Connect.

It is commonly used for single sign-on (SSO) and enterprise authentication strategies.

Explanation of Incorrect Answers:

A. It authenticates Developer Portal sites. → Incorrect

The Developer Portal uses its own authentication mechanisms, such as LDAP, local user registries, and external identity providers, but the Authentication URL user registry does not authenticate Developer Portal users directly.

B. It authenticates users defined in a provider organization. → Incorrect

Users in a provider organization (such as API providers and administrators) are typically authenticated using Cloud Manager or an LDAP-based user registry, not via an Authentication URL user registry.

C. It authenticates Cloud Manager users. → Incorrect

Cloud Manager users are typically authenticated via LDAP or API Connect’s built-in user registry.

The Authentication URL user registry is not responsible for Cloud Manager authentication.

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

IBM API Connect User Registry Types

IBM API Connect Authentication and User Management

IBM Cloud Pak for Integration Documentation

https://www.ibm.com/docs/SSMNED_v10/com.ibm.apic.cmc.doc/capic_cmc_registries_concepts.html

An administrator is checking that all components and software in their estate are licensed. They have only purchased Cloud Pak for Integration (CP4I) licenses.

How are the OpenShift master nodes licensed?

CP4I licenses include entitlement for the entire OpenShift cluster that they run on, and the administrator can count against the master nodes.

OpenShift master nodes do not consume OpenShift license entitlement, so no license is needed.

The administrator will need to purchase additional OpenShift licenses to cover the master nodes.

CP4I licenses include entitlement for 3 cores of OpenShift per core of CP4I.

In IBM Cloud Pak for Integration (CP4I) v2021.2, licensing is based on Virtual Processor Cores (VPCs), and it includes entitlement for OpenShift usage. However, OpenShift master nodes (control plane nodes) do not consume license entitlement, because:

OpenShift licensing only applies to worker nodes.

The master nodes (control plane nodes) manage cluster operations and scheduling, but they do not run user workloads.

IBM’s Cloud Pak licensing model considers only the worker nodes for licensing purposes.

Master nodes are essential infrastructure and are excluded from entitlement calculations.

IBM and Red Hat do not charge for OpenShift master nodes in Cloud Pak deployments.

Explanation of Incorrect Answers:

A. CP4I licenses include entitlement for the entire OpenShift cluster that they run on, and the administrator can count against the master nodes. → ❌ Incorrect

CP4I licenses do cover OpenShift, but only for worker nodes where workloads are deployed.

Master nodes are excluded from licensing calculations.

C. The administrator will need to purchase additional OpenShift licenses to cover the master nodes. → ❌ Incorrect

No additional OpenShift licenses are required for master nodes.

OpenShift licensing only applies to worker nodes that run applications.

D. CP4I licenses include entitlement for 3 cores of OpenShift per core of CP4I. → ❌ Incorrect

The standard IBM Cloud Pak licensing model provides 1 VPC of OpenShift for 1 VPC of CP4I, not a 3:1 ratio.

Additionally, this applies only to worker nodes, not master nodes.

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

IBM Cloud Pak Licensing Guide

IBM Cloud Pak for Integration Licensing Details

Red Hat OpenShift Licensing Guide

What are the two possible options to upgrade Common Services from the Extended Update Support (EUS) version (3.6.x) to the continuous delivery versions (3.7.x or later)?

Click the Update button on the Details page of the common-services operand.

Select the Update Common Services option from the Cloud Pak Administration Hub console.

Use the OpenShift web console to change the operator channel from stable-v1 to v3.

Run the script provided by IBM using links available in the documentation.

Click the Update button on the Details page of the IBM Cloud Pak Foundational Services operator.

IBM Cloud Pak for Integration (CP4I) v2021.2 relies on IBM Cloud Pak Foundational Services, which was previously known as IBM Common Services. Upgrading from the Extended Update Support (EUS) version (3.6.x) to a continuous delivery version (3.7.x or later) requires following IBM's recommended upgrade paths. The two valid options are:

Using IBM's provided script (Option D):

IBM provides a script specifically designed to upgrade Cloud Pak Foundational Services from an EUS version to a later continuous delivery (CD) version.

This script automates the necessary upgrade steps and ensures dependencies are properly handled.

IBM's official documentation includes the script download links and usage instructions.

Using the IBM Cloud Pak Foundational Services operator update button (Option E):

The IBM Cloud Pak Foundational Services operator in the OpenShift web console provides an update button that allows administrators to upgrade services.

This method is recommended by IBM for in-place upgrades, ensuring minimal disruption while moving from 3.6.x to a later version.

The upgrade process includes rolling updates to maintain high availability.

Incorrect Options and Justification:

Option A (Click the Update button on the Details page of the common-services operand):

There is no direct update button at the operand level that facilitates the entire upgrade from EUS to CD versions.

The upgrade needs to be performed at the operator level, not just at the operand level.

Option B (Select the Update Common Services option from the Cloud Pak Administration Hub console):

The Cloud Pak Administration Hub does not provide a direct update option for Common Services.

Updates are handled via OpenShift or IBM’s provided scripts.

Option C (Use the OpenShift web console to change the operator channel from stable-v1 to v3):

Simply changing the operator channel does not automatically upgrade from an EUS version to a continuous delivery version.

IBM requires following specific upgrade steps, including running a script or using the update button in the operator.

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

IBM Cloud Pak Foundational Services Upgrade Documentation: IBM Official Documentation

IBM Cloud Pak for Integration v2021.2 Knowledge Center

IBM Redbooks and Technical Articles on CP4I Administration

An administrator has configured OpenShift Container Platform (OCP) log forwarding to external third-party systems. What is expected behavior when the external logging aggregator becomes unavailable and the collected logs buffer size has been completely filled?

OCP rotates the logs and deletes them.

OCP stores the logs in a temporary PVC.

OCP extends the buffer size and resumes logs collection.

The Fluentd daemon is forced to stop.

In IBM Cloud Pak for Integration (CP4I) v2021.2, which runs on OpenShift Container Platform (OCP), administrators can configure log forwarding to an external log aggregator (e.g., Elasticsearch, Splunk, or Loki).

OCP uses Fluentd as the log collector, and when log forwarding fails because the external logging aggregator has become unavailable, the following happens:

Fluentd buffers the logs in memory (up to a defined limit).

If the buffer reaches its maximum size, OCP follows its default log management policy:

Older logs are rotated and deleted to make space for new logs.

This prevents excessive storage consumption on the OpenShift cluster.

This behavior ensures that the logging system does not stop functioning but rather manages storage efficiently by deleting older logs once the buffer is full.

Why Answer A is Correct?

Log rotation is a default behavior in OCP when storage limits are reached.

If logs cannot be forwarded and the buffer is full, OCP deletes old logs to continue operations.

This is a standard logging mechanism to prevent resource exhaustion.

Explanation of Incorrect Answers:

B. OCP stores the logs in a temporary PVC. → Incorrect

OCP does not automatically store logs in a Persistent Volume Claim (PVC).

Logs are buffered in memory and not redirected to PVC storage unless explicitly configured.

C. OCP extends the buffer size and resumes log collection. → Incorrect

The buffer size is fixed and does not dynamically expand.

Instead of increasing the buffer, older logs are rotated out when the limit is reached.

D. The Fluentd daemon is forced to stop. → Incorrect

Fluentd does not stop when the external log aggregator is down.

It continues collecting logs, buffering them until the limit is reached, and then follows log rotation policies.

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

IBM Cloud Pak for Integration Logging and Monitoring

OpenShift Logging Overview

Fluentd Log Forwarding in OpenShift

OpenShift Log Rotation and Retention Policy

What is the effect of creating a second medium size profile?

The first profile will be replaced by the second profile.

The second profile will be configured with a medium size.

The first profile will be re-configured with a medium size.

The second profile will be configured with a large size.

In IBM Cloud Pak for Integration (CP4I) v2021.2, profiles define the resource allocation and configuration settings for deployed services. When creating a second medium-size profile, the system will allocate the resources according to the medium-size specifications, without affecting the first profile.

Why Option B is Correct:

IBM Cloud Pak for Integration supports multiple profiles, each with its own resource allocation.

When a second medium-size profile is created, it is independently assigned the medium-size configuration without modifying the existing profiles.

This allows multiple services to run with similar resource constraints but remain separately managed.

Explanation of Incorrect Answers:

A. The first profile will be replaced by the second profile. → ❌ Incorrect

Creating a new profile does not replace an existing profile; each profile is independent.

C. The first profile will be re-configured with a medium size. → ❌ Incorrect

The first profile remains unchanged. A second profile does not modify or reconfigure an existing one.

D. The second profile will be configured with a large size. → ❌ Incorrect

The second profile will retain the specified medium size and will not be automatically upgraded to a large size.

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

IBM Cloud Pak for Integration Sizing and Profiles

Managing Profiles in IBM Cloud Pak for Integration

OpenShift Resource Allocation for CP4I

Select all that apply

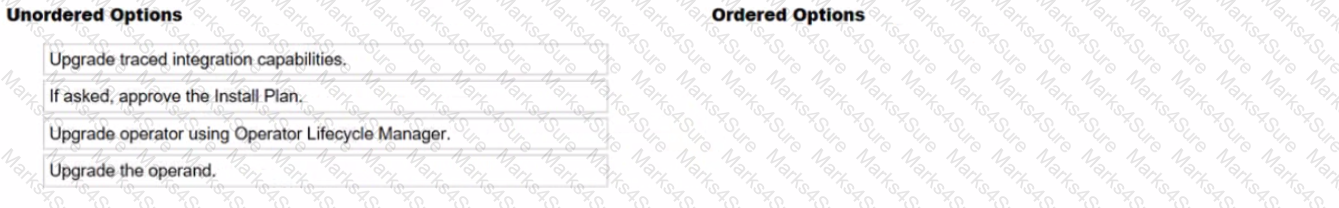

What is the correct order of the Operations Dashboard upgrade?

Upgrading the operator

If asked, approve the install plan

Upgrading the operand

Upgrading the traced integration capabilities

1. Upgrade operator using Operator Lifecycle Manager.

The Operator Lifecycle Manager (OLM) manages the upgrade of the Operations Dashboard operator in OpenShift.

This ensures that the latest version is available for managing operands.

2. If asked, approve the Install Plan.

Some installations require manual approval of the Install Plan to proceed with the operator upgrade.

If configured for automatic updates, this step may not be required.

3. Upgrade the operand.

Once the operator is upgraded, the operand (Operations Dashboard instance) needs to be updated to the latest version.

This step ensures that the upgraded operator manages the most recent operand version.

4. Upgrade traced integration capabilities.

Finally, upgrade any traced integration capabilities that depend on the Operations Dashboard.

This step ensures compatibility and full functionality with the updated components.

In IBM Cloud Pak for Integration (CP4I) v2021.2, the Operations Dashboard provides tracing and monitoring for integration capabilities. The correct upgrade sequence ensures a smooth transition with minimal downtime:

Upgrade the Operator using OLM – The Operator manages operands and must be upgraded first.

Approve the Install Plan (if required) – Some operator updates require manual approval before proceeding.

Upgrade the Operand – The actual Operations Dashboard component is upgraded after the operator.

Upgrade Traced Integration Capabilities – Ensures all monitored services are compatible with the new Operations Dashboard version.

IBM Cloud Pak for Integration (CP4I) v2021.2 Administration References:

Upgrading Operators using Operator Lifecycle Manager (OLM)

IBM Cloud Pak for Integration Operations Dashboard

Best Practices for Upgrading CP4I Components

An administrator is using the Storage Suite for Cloud Paks entitlement that they received with their Cloud Pak for Integration (CP4I) licenses. The administrator has 200 VPC of CP4I and wants to be licensed to use 8TB of OpenShift Container Storage for 3 years. They have not used or allocated any of their Storage Suite entitlement so far.

What actions must be taken with their Storage Suite entitlement?

The Storage Suite entitlement covers the administrator's license needs only if the OpenShift cluster is running on IBM Cloud or AWS.

The Storage Suite entitlement can be used for OCS; however, 8TB will require 320 VPCs of CP4I.

The Storage Suite entitlement already covers the administrator's license needs.

The Storage Suite entitlement only covers IBM Spectrum Scale, Spectrum Virtualize, Spectrum Discover, and Spectrum Protect Plus products, but the licenses can be converted to OCS.

The IBM Storage Suite for Cloud Paks provides storage licensing for various IBM Cloud Pak solutions, including Cloud Pak for Integration (CP4I) . It supports multiple storage options, such as IBM Spectrum Scale, IBM Spectrum Virtualize, IBM Spectrum Discover, IBM Spectrum Protect Plus, and OpenShift Container Storage (OCS) .

Understanding Licensing Conversion:

IBM licenses CP4I based on Virtual Processor Cores (VPCs).

Storage Suite for Cloud Paks uses a conversion factor: 1 VPC of CP4I provides 25GB of OCS storage entitlement.

To calculate how many CP4I VPCs are required for 8TB (8000GB) of OCS:

$$\frac{8000\ \text{GB}}{25\ \text{GB per VPC}} = 320\ \text{VPCs}$$

Since the administrator only has 200 VPCs of CP4I , they do not have enough entitlement to cover the full 8TB of OCS storage. They would need an additional 120 VPCs to fully meet the requirement.
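The arithmetic above can be checked with a short sketch. It assumes the 25GB-per-VPC conversion factor and the 1TB = 1000GB convention used in this explanation; the function and constant names are illustrative only and do not come from any IBM tool.

```python
import math

# Assumption taken from the explanation above: 1 VPC of CP4I entitles 25 GB of OCS.
GB_PER_VPC = 25
# The explanation treats 8 TB as 8000 GB, so 1 TB = 1000 GB here.
GB_PER_TB = 1000


def vpcs_required(storage_tb: float) -> int:
    """Return the number of CP4I VPCs needed to entitle the given OCS capacity."""
    return math.ceil(storage_tb * GB_PER_TB / GB_PER_VPC)


def storage_entitled_tb(vpcs: int) -> float:
    """Return the OCS capacity (in TB) entitled by the given number of VPCs."""
    return vpcs * GB_PER_VPC / GB_PER_TB


needed = vpcs_required(8)            # VPCs needed for 8 TB of OCS
owned = 200                          # VPCs the administrator already holds
shortfall = max(0, needed - owned)   # additional VPCs required

print(needed, storage_entitled_tb(owned), shortfall)
```

Under these assumptions the sketch reproduces the figures in the explanation: 320 VPCs needed, 5TB covered by the existing 200 VPCs, and a shortfall of 120 VPCs.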

Why Other Options Are Incorrect:

A. The Storage Suite entitlement covers the administrator's license needs only if the OpenShift cluster is running on IBM Cloud or AWS.

Incorrect, because Storage Suite for Cloud Paks can be used on any OpenShift deployment, including on-premises, IBM Cloud, AWS, or other cloud providers.

C. The Storage Suite entitlement already covers the administrator's license needs.

Incorrect, because 200 VPCs of CP4I only provide 5TB (200 × 25GB) of OCS storage, but the administrator needs 8TB.

D. The Storage Suite entitlement only covers IBM Spectrum products, but the licenses can be converted to OCS.

Incorrect, because Storage Suite already includes OpenShift Container Storage (OCS) as part of its licensing model without requiring any conversion.

Which storage type is supported with the App Connect Enterprise (ACE) Dashboard instance?

Ephemeral storage

Flash storage

File storage

Raw block storage

In IBM Cloud Pak for Integration (CP4I) v2021.2 , App Connect Enterprise (ACE) Dashboard requires persistent storage to maintain configurations, logs, and runtime data. The supported storage type for the ACE Dashboard instance is file storage because:

It supports ReadWriteMany (RWX) access mode , allowing multiple pods to access shared data.

It ensures data persistence across restarts and upgrades, which is essential for managing ACE integrations.

It is compatible with NFS, IBM Spectrum Scale, and OpenShift Container Storage (OCS) , all of which provide file system-based storage .

Why the other options are incorrect:

A. Ephemeral storage – Incorrect

Ephemeral storage is temporary, and its data is lost when the pod restarts or is rescheduled.

ACE Dashboard needs persistent storage to retain configuration and logs.

B. Flash storage – Incorrect

Flash storage refers to SSD-based storage and is not specifically required for the ACE Dashboard.

While flash storage can be used for better performance, ACE requires file-based persistence, which is a storage type rather than a storage medium.

D. Raw block storage – Incorrect

Block storage is low-level storage that is used for databases and applications requiring high-performance IOPS.

ACE Dashboard needs a shared file system, which block storage does not provide.

Which command shows the current cluster version and available updates?

update

adm upgrade

adm update

upgrade

In IBM Cloud Pak for Integration (CP4I) v2021.2 , which runs on OpenShift , administrators often need to check the current cluster version and available updates before performing an upgrade.

The correct command to display the current OpenShift cluster version and check for available updates is:

oc adm upgrade

This command provides information about:

The current OpenShift cluster version .

Whether a newer version is available for upgrade.

The channel and upgrade path .

Why the other options are incorrect:

A. update – Incorrect

There is no oc update or update command in the OpenShift CLI for checking cluster versions.

C. adm update – Incorrect

oc adm update is not a valid command in OpenShift. The correct subcommand is adm upgrade.

D. upgrade – Incorrect

oc upgrade is not a valid OpenShift CLI command. The correct syntax requires adm upgrade.

Example Output of oc adm upgrade:

$ oc adm upgrade

Cluster version is 4.10.16

Updates available:

Version 4.11.0

Version 4.11.1

The monitoring component of Cloud Pak for Integration is built on which two tools?

Jaeger

Prometheus

Grafana

Logstash

Kibana

The monitoring component of IBM Cloud Pak for Integration (CP4I) v2021.2 is built on Prometheus and Grafana . These tools are widely used for monitoring and visualization in Kubernetes-based environments like OpenShift.

Prometheus – A time-series database designed for monitoring and alerting. It collects metrics from different services and components running within CP4I, enabling real-time observability.

Grafana – A visualization tool that integrates with Prometheus to create dashboards for monitoring system performance, resource utilization, and application health.

Explanation of Other Options:

A. Jaeger → Incorrect. Jaeger is used for distributed tracing, not core monitoring.

D. Logstash → Incorrect. Logstash is used for log processing and forwarding, primarily in ELK stacks.

E. Kibana → Incorrect. Kibana is a visualization tool but is not the primary monitoring tool in CP4I; Grafana is used instead.

Select all that apply

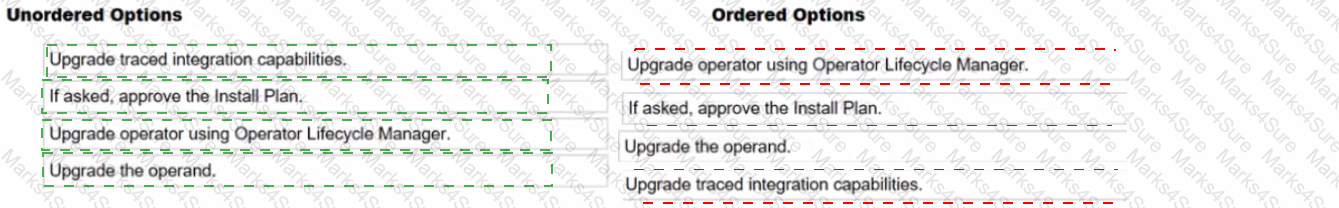

What is the correct sequence of steps of how a multi-instance queue manager failover occurs?

1️⃣ The shared file system releases the locks originally held by the active instance.

When the active IBM MQ instance fails, the shared storage system releases the file locks, allowing the standby instance to take over.

2️⃣ The standby instance acquires the locks and starts.

The standby queue manager detects the failure, acquires the released locks, and promotes itself to the active instance .

3️⃣ Kubernetes Pod readiness probe detects the ready container has changed and redirects network traffic.

OpenShift/Kubernetes detects that the active instance has changed and updates its internal routing to redirect connections to the new active queue manager.

4️⃣ IBM MQ clients reconnect.

MQ clients reconnect to the new active queue manager using automatic reconnect mechanisms such as Client Auto Reconnect (MQCNO) or channel reconnect options .

Comprehensive Detailed Explanation:

In IBM Cloud Pak for Integration (CP4I) v2021.2 , multi-instance queue managers use a shared file system to enable failover. The failover process follows these key steps:

Release of Locks:

The active queue manager holds a lock on shared storage.

When it fails , the lock is released, allowing the standby instance to take over.

Standby Becomes Active:

The standby queue manager acquires the released locks and promotes itself to active .

Network Traffic Redirection:

Kubernetes/OpenShift detects the pod status change using readiness probes and redirects network traffic to the new active instance.

Client Reconnection:

IBM MQ clients reconnect to the new active instance, ensuring minimal downtime.
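The ordering of these four steps can be illustrated with a toy simulation. This is only a sketch of the lock hand-over logic described above, not how IBM MQ or Kubernetes implement it; the class, function, and instance names (SharedFileLock, simulate_failover, qm-active, qm-standby) are hypothetical.

```python
class SharedFileLock:
    """Toy stand-in for the lock held on the shared file system."""

    def __init__(self):
        self.holder = None

    def acquire(self, instance: str) -> bool:
        # Only one instance can hold the lock at a time.
        if self.holder is None:
            self.holder = instance
            return True
        return False

    def release(self, instance: str) -> None:
        # Only the current holder can release the lock.
        if self.holder == instance:
            self.holder = None


def simulate_failover():
    """Walk through the four failover steps and return them in order."""
    events = []
    lock = SharedFileLock()
    lock.acquire("qm-active")                     # the active instance holds the lock
    standby_started = lock.acquire("qm-standby")  # fails while the lock is held
    events.append(f"standby started early: {standby_started}")

    # Step 1: the active instance fails and its lock is released.
    lock.release("qm-active")
    events.append("lock released")

    # Step 2: the standby instance acquires the lock and starts.
    if lock.acquire("qm-standby"):
        events.append("standby active")

    # Step 3: only the lock holder reports ready, so traffic is routed to it.
    ready_pod = lock.holder
    events.append(f"traffic routed to {ready_pod}")

    # Step 4: clients reconnect to whichever pod is ready.
    events.append(f"clients reconnected to {ready_pod}")
    return events


for event in simulate_failover():
    print(event)
```

The point of the sketch is the dependency chain: the standby cannot start until the lock is free, traffic cannot be redirected until the standby is ready, and clients can only reconnect once traffic reaches the new active instance.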


Which CLI command will retrieve the logs from a pod?

oc get logs ...

oc logs ...

oc describe ...

oc retrieve logs ...

In IBM Cloud Pak for Integration (CP4I) v2021.2, which runs on Red Hat OpenShift, administrators often need to retrieve logs from pods to diagnose issues or monitor application behavior. The correct OpenShift CLI ( oc ) command to retrieve logs from a specific pod is:

oc logs <pod_name>

This command fetches the logs of a running container within the specified pod. If a pod has multiple containers, the -c flag is used to specify the container name:

oc logs <pod_name> -c <container_name>
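When log retrieval is scripted, the same two command forms can be assembled programmatically. The following is a minimal sketch; the helper name build_oc_logs_command and the pod and container names are hypothetical, and only the oc logs subcommand with its -c and -n flags is taken from the CLI itself.

```python
from typing import List, Optional


def build_oc_logs_command(pod: str,
                          container: Optional[str] = None,
                          namespace: Optional[str] = None) -> List[str]:
    """Build the argument list for `oc logs`, adding -c and -n only when given."""
    cmd = ["oc", "logs", pod]
    if container:
        cmd += ["-c", container]   # select one container in a multi-container pod
    if namespace:
        cmd += ["-n", namespace]   # target a namespace other than the current one
    return cmd


# Hypothetical pod and container names, for illustration only.
print(" ".join(build_oc_logs_command("my-pod")))
print(" ".join(build_oc_logs_command("my-pod", container="my-container")))
```

The resulting list can be passed to subprocess.run on a workstation where oc is installed and logged in to the cluster.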

Explanation of Other Options:

A. oc get logs → Incorrect. The oc get command is used to list resources (such as pods, deployments, etc.), but it does not retrieve logs.

C. oc describe → Incorrect. This command provides detailed information about a pod, including events and status, but not logs.

D. oc retrieve logs → Incorrect. There is no such command in the OpenShift CLI.

Which statement describes the Aspera High Speed Transfer Server (HSTS) within IBM Cloud Pak for Integration?

HSTS allows an unlimited number of concurrent users to transfer files of up to 500GB at high speed using an Aspera client.

HSTS allows an unlimited number of concurrent users to transfer files of up to 100GB at high speed using an Aspera client.

HSTS allows an unlimited number of concurrent users to transfer files of up to 1TB at high speed using an Aspera client.

HSTS allows an unlimited number of concurrent users to transfer files of any size at high speed using an Aspera client.

IBM Aspera High-Speed Transfer Server (HSTS) is a core component of IBM Cloud Pak for Integration (CP4I) that enables secure, high-speed file transfers over networks, regardless of file size, distance, or network conditions.

HSTS does not impose a file size limit , meaning users can transfer files of any size efficiently.

It uses IBM Aspera’s FASP (Fast and Secure Protocol) to achieve transfer speeds significantly faster than traditional TCP-based transfers , even over long distances or unreliable networks.

HSTS allows an unlimited number of concurrent users to transfer files using an Aspera client .

It ensures secure, encrypted, and efficient file transfers with features like bandwidth control and automatic retry in case of network failures.

Analysis of the Options:

A. HSTS allows an unlimited number of concurrent users to transfer files of up to 500GB at high speed using an Aspera client. (Incorrect)

Incorrect file size limit – HSTS supports files of any size without restrictions.

B. HSTS allows an unlimited number of concurrent users to transfer files of up to 100GB at high speed using an Aspera client. (Incorrect)

Incorrect file size limit – There is no 100GB limit in HSTS.

C. HSTS allows an unlimited number of concurrent users to transfer files of up to 1TB at high speed using an Aspera client. (Incorrect)

Incorrect file size limit – There is no 1TB limit in HSTS.

D. HSTS allows an unlimited number of concurrent users to transfer files of any size at high speed using an Aspera client. (Correct)

Correct answer – HSTS does not impose a file size limit, so this is the only statement that describes it accurately.

Which statement is true about enabling open tracing for API Connect?

Only APIs using API Gateway can be traced in the Operations Dashboard.

API debug data is made available in OpenShift cluster logging.

This feature is only available in non-production deployment profiles

Trace data can be viewed in Analytics dashboards

Open Tracing in IBM API Connect allows for distributed tracing of API calls across the system, helping administrators analyze performance bottlenecks and troubleshoot issues. However, this capability is specifically designed to work with APIs that utilize the API Gateway .

Option A (Correct Answer) : IBM API Connect integrates with OpenTracing for API Gateway, allowing the tracing of API requests in the Operations Dashboard . This provides deep visibility into request flows and latencies.

Option B (Incorrect) : API debug data is not directly made available in OpenShift cluster logging. Instead, API tracing data is captured using OpenTracing-compatible tools.

Option C (Incorrect) : OpenTracing is available for all deployment profiles, including production, not just non-production environments.

Option D (Incorrect) : Trace data is not directly visible in Analytics dashboards but rather in the Operations Dashboard where administrators can inspect API request traces.


Which statement is true regarding tracing in Cloud Pak for Integration?

If tracing has not been enabled, the administrator can turn it on without the need to redeploy the integration capability.

Distributed tracing data is enabled by default when a new capability is instantiated through the Platform Navigator.

The administrator can schedule tracing to run intermittently for each specified integration capability.

Tracing for an integration capability instance can be enabled only when deploying the instance.

In IBM Cloud Pak for Integration (CP4I) , distributed tracing allows administrators to monitor the flow of requests across multiple services. This feature helps in diagnosing performance issues and debugging integration flows.

Tracing must be enabled during the initial deployment of an integration capability instance .

Once deployed, tracing settings cannot be changed dynamically without redeploying the instance.

This ensures that tracing configurations are properly set up and integrated with observability tools like OpenTelemetry, Jaeger, or Zipkin.

Analysis of the Options:

A. If tracing has not been enabled, the administrator can turn it on without the need to redeploy the integration capability. (Incorrect)

Tracing cannot be enabled after deployment. It must be configured during the initial deployment process.

B. Distributed tracing data is enabled by default when a new capability is instantiated through the Platform Navigator. (Incorrect)

Tracing is not enabled by default. The administrator must manually enable it during deployment.

C. The administrator can schedule tracing to run intermittently for each specified integration capability. (Incorrect)

There is no scheduling option for tracing in CP4I. Once enabled, tracing runs continuously based on the chosen settings.

D. Tracing for an integration capability instance can be enabled only when deploying the instance. (Correct)

This is the correct answer. Tracing settings are defined at deployment and cannot be modified afterward without redeploying the instance.

What is the result of issuing the following command?

oc get packagemanifest -n ibm-common-services ibm-common-service-operator -o jsonpath='{.status.channels[*].name}'

It lists available upgrade channels for Cloud Pak for Integration Foundational Services.

It displays the status and names of channels in the default queue manager.

It retrieves a manifest of services packaged in Cloud Pak for Integration operators.

It returns an operator package manifest in a JSON structure.

The command oc get packagemanifest -n ibm-common-services ibm-common-service-operator -o jsonpath='{.status.channels[*].name}' performs the following actions:

oc get packagemanifest → Retrieves package manifest information for operators that are available from the cluster's catalog sources.

-n ibm-common-services → Specifies the namespace where IBM Common Services are installed.

ibm-common-service-operator → Targets the IBM Common Service Operator, which manages foundational services for Cloud Pak for Integration.

-o jsonpath='{.status.channels[*].name}' → Extracts only the channel names from the status field of the package manifest and prints them as plain text.
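To see what the jsonpath expression selects, the same extraction can be reproduced on a small JSON document. This is a sketch only: the manifest below is a hypothetical, heavily trimmed stand-in for a real PackageManifest, and the channel names channel-a and channel-b are placeholders rather than actual IBM channel names.

```python
import json

# Hypothetical, trimmed PackageManifest. Real manifests carry many more fields.
manifest_json = """
{
  "kind": "PackageManifest",
  "metadata": {"name": "ibm-common-service-operator"},
  "status": {
    "channels": [
      {"name": "channel-a", "currentCSV": "example-operator.v1"},
      {"name": "channel-b", "currentCSV": "example-operator.v2"}
    ]
  }
}
"""


def channel_names(manifest: dict) -> list:
    """Python equivalent of the jsonpath {.status.channels[*].name}."""
    return [channel["name"] for channel in manifest["status"]["channels"]]


names = channel_names(json.loads(manifest_json))
# Like the CLI, print the matches as plain text separated by spaces.
print(" ".join(names))
```

The expression walks into status, iterates over every entry of channels, and keeps only each entry's name, which is why the command prints channel names rather than the whole manifest.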

Why Answer A is Correct:

The IBM Common Service Operator is part of Cloud Pak for Integration Foundational Services.

The status.channels[*].name field lists the available upgrade channels (e.g., stable, v1, latest).

This command helps administrators determine which upgrade paths are available for foundational services.

Explanation of Incorrect Answers:

B. It displays the status and names of channels in the default queue manager. → Incorrect

This command is not related to IBM MQ queue managers.

It queries package manifests for IBM Common Services operators, not queue managers.

C. It retrieves a manifest of services packaged in Cloud Pak for Integration operators. → Incorrect

The command does not return a full list of services; it only displays upgrade channels.

D. It returns an operator package manifest in a JSON structure. → Incorrect

The command outputs only the names of upgrade channels in plain text, not the full JSON structure of the package manifest.

How can OLM be triggered to start upgrading the IBM Cloud Pak for Integration Platform Navigator operator?

Navigate to the Installed Operators, select the Platform Navigator operator and click the Upgrade button on the Details page.

Navigate to the Installed Operators, select the Platform Navigator operator, select the operand instance, and select Upgrade from the Actions list.

Navigate to the Installed Operators, select the Platform Navigator operator and select the latest channel version on the Subscription tab.

Open the Platform Navigator web interface and select Update from the main menu.

In IBM Cloud Pak for Integration (CP4I) v2021.2, the Operator Lifecycle Manager (OLM) manages operator upgrades in OpenShift. The IBM Cloud Pak Platform Navigator operator is updated through the OLM subscription mechanism, which controls how updates are applied.

Correct Answer: C. To trigger OLM to start upgrading the Platform Navigator operator, select the latest channel version on the Subscription tab of the operator.

Which two of the following support Cloud Pak for Integration deployments?

IBM Cloud Code Engine

Amazon Web Services

Microsoft Azure

IBM Cloud Foundry

Docker

IBM Cloud Pak for Integration (CP4I) v2021.2 is designed to run on containerized environments that support Red Hat OpenShift , which can be deployed on various public clouds and on-premises environments. The two correct options that support CP4I deployments are:

Amazon Web Services (AWS) (Option B) ✅

AWS supports IBM Cloud Pak for Integration via Red Hat OpenShift on AWS (ROSA) or self-managed OpenShift clusters running on AWS EC2 instances.

CP4I components such as API Connect, App Connect, MQ, and Event Streams can be deployed on OpenShift running on AWS.

What is one method that can be used to uninstall IBM Cloud Pak for Integration?

Uninstall.sh

Cloud Pak for Integration console

Operator Catalog

OpenShift console

Uninstalling IBM Cloud Pak for Integration (CP4I) v2021.2 requires removing the operators, instances, and related resources from the OpenShift cluster. One method to achieve this is through the OpenShift console , which provides a graphical interface for managing operators and deployments.

Why Option D (OpenShift Console) is Correct:

The OpenShift Web Console allows administrators to:

Navigate to Operators → Installed Operators and remove CP4I-related operators.

Delete all associated custom resources (CRs) and namespaces where CP4I was deployed.

Ensure that all PVCs (Persistent Volume Claims) and secrets associated with CP4I are also deleted.

This is an officially supported method for uninstalling CP4I in OpenShift environments.

Explanation of Incorrect Answers:

A. Uninstall.sh → ❌ Incorrect

There is no official Uninstall.sh script provided by IBM for CP4I removal.

IBM’s documentation recommends manual removal through OpenShift.

B. Cloud Pak for Integration console → ❌ Incorrect

The CP4I console is used for managing integration components but does not provide an option to uninstall CP4I itself.

C. Operator Catalog → ❌ Incorrect

The Operator Catalog lists available operators but does not handle uninstallation.

Operators need to be manually removed via the OpenShift Console or CLI.

OpenShift supports forwarding cluster logs to which external third-party system?

Splunk.

Kafka Broker.

Apache Lucene.

Apache Solr.

In IBM Cloud Pak for Integration (CP4I) v2021.2 , which runs on Red Hat OpenShift , cluster logging can be forwarded to external third-party systems , with Splunk being one of the officially supported destinations.

OpenShift Log Forwarding Features:

The OpenShift Cluster Logging Operator enables log forwarding.

It supports forwarding logs to various external logging solutions, including Splunk.

It uses the Fluentd log collector to send logs to Splunk's HTTP Event Collector (HEC) endpoint.

It provides centralized log management, analysis, and visualization.

Why Not the Other Options?

B. Kafka Broker – OpenShift does support sending logs to Kafka, but Kafka is a message broker, not a full-fledged logging system like Splunk.

C. Apache Lucene – Lucene is a search engine library, not a log management system.

D. Apache Solr – Solr is based on Lucene and is used for search indexing, not log forwarding.

What type of authentication uses an XML-based markup language to exchange identity, authentication, and authorization information between an identity provider and a service provider?

Security Assertion Markup Language (SAML)

IAM SSO authentication

IAM via XML

Enterprise XML

Security Assertion Markup Language (SAML) is an XML-based standard used for exchanging identity, authentication, and authorization information between an Identity Provider (IdP) and a Service Provider (SP) .

SAML is widely used for Single Sign-On (SSO) authentication in enterprise environments, allowing users to authenticate once with an identity provider and gain access to multiple applications without needing to log in again.

How SAML Works:

User Requests Access → The user tries to access a service (Service Provider).

Redirect to Identity Provider (IdP) → If not authenticated, the user is redirected to an IdP (e.g., Okta, Active Directory Federation Services).

User Authenticates with IdP → The IdP verifies user credentials.

SAML Assertion is Sent → The IdP generates a SAML assertion (XML-based token) containing authentication and authorization details.

Service Provider Grants Access → The service provider validates the SAML assertion and grants access.

SAML is commonly used in IBM Cloud Pak for Integration (CP4I) v2021.2 to integrate with enterprise authentication systems for secure access control.
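To make the "XML-based" part concrete, the sketch below reads identity details out of a simplified assertion. The assertion content (issuer URL, user name, role attribute) is invented for illustration, and the helper name read_assertion is hypothetical. The sketch deliberately skips signature and condition validation, which a real service provider must perform before trusting an assertion.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the SAML 2.0 assertion schema.
SAML_NS = {"saml": "urn:oasis:names:tc:SAML:2.0:assertion"}

# Simplified, unsigned assertion with made-up values, for illustration only.
assertion_xml = """
<saml:Assertion xmlns:saml="urn:oasis:names:tc:SAML:2.0:assertion" Version="2.0">
  <saml:Issuer>https://idp.example.com</saml:Issuer>
  <saml:Subject>
    <saml:NameID>alice@example.com</saml:NameID>
  </saml:Subject>
  <saml:AttributeStatement>
    <saml:Attribute Name="role">
      <saml:AttributeValue>admin</saml:AttributeValue>
    </saml:Attribute>
  </saml:AttributeStatement>
</saml:Assertion>
"""


def read_assertion(xml_text: str):
    """Return (issuer, subject, attributes) from a SAML assertion string."""
    root = ET.fromstring(xml_text.strip())
    issuer = root.findtext("saml:Issuer", namespaces=SAML_NS)
    subject = root.findtext("saml:Subject/saml:NameID", namespaces=SAML_NS)
    attributes = {}
    for attr in root.findall("saml:AttributeStatement/saml:Attribute", SAML_NS):
        values = [v.text for v in attr.findall("saml:AttributeValue", SAML_NS)]
        attributes[attr.get("Name")] = values
    return issuer, subject, attributes


issuer, subject, attributes = read_assertion(assertion_xml)
print(issuer, subject, attributes)
```

The issuer identifies the IdP, the subject identifies the authenticated user, and the attribute statement carries the authorization details that the service provider uses to decide what the user may access.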

Explanation of Incorrect Answers:

B. IAM SSO authentication → ❌ Incorrect

IAM (Identity and Access Management) supports SAML for SSO, but "IAM SSO authentication" is not a specific XML-based authentication standard.

C. IAM via XML → ❌ Incorrect

There is no authentication method called "IAM via XML." IBM IAM systems may use XML configurations, but IAM itself is not an XML-based authentication protocol.

D. Enterprise XML → ❌ Incorrect

"Enterprise XML" is not a standard authentication mechanism. While XML is used in many enterprise systems, it is not a dedicated authentication protocol like SAML.

TESTED 10 May 2026